To become better at prompt engineering, learn how to think like a manager.

Written at: 30 Mar, 2026

Last Updated: 02 Apr, 2026

Many people believe prompt engineering is about finding the perfect sentence to give an AI model. Wording does matter, but this view overlooks the other techniques involved.

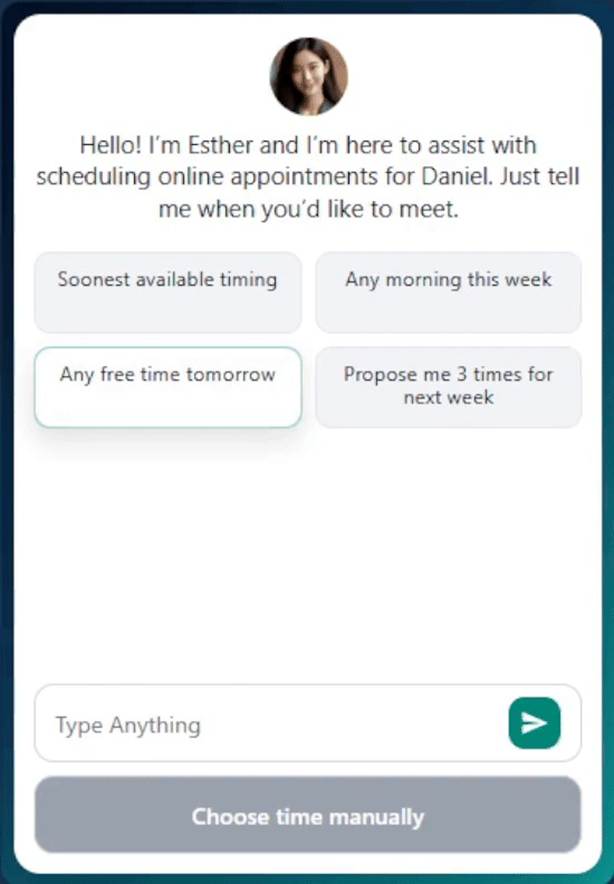

While we built our latest product, MeetWithMe.ai, an AI scheduling assistant, in just three months, my engineers faced many struggles initially. I quickly realized that they were using AI like Codex or Cursor as a tool or chatbot instead of treating it like a colleague or another staff member.

Prompt engineering is not just about clever phrasing; it is more about managing work. Instead of thinking of AI as a tool that magically produces answers, it is more useful to think of it as a worker that needs direction: clear tasks, boundaries, checkpoints, and coordination. Designing prompts with this mindset significantly improves the quality and reliability of the output.

In other words, the real skill behind prompt engineering is learning to think like a good manager and treating your AI—be it Codex, ChatGPT, or Cursor—like just another staff member or colleague.

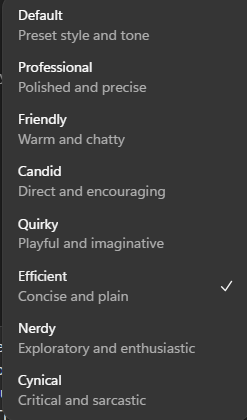

For example, do you find that even though ChatGPT now offers a concise tone, it is sometimes still too long-winded? And what if you need something with more detail? Instead of constantly changing the tone, you can ask it to save the following to its memory: “When I say tldr, you will make it compressed. tldr3 = 3 lines max. tldr2p = max 2 paragraphs.”

That way, when you ask a question and then add "tldr3" after it, it will always try to reply in just 3 lines, making its answer much easier to digest. I also post small tips on LinkedIn on how you can make your daily use of AI more convenient, so do connect with me for them.
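If you call a model from your own code rather than relying on ChatGPT's memory, the same shortcut idea could be sketched roughly as below. This is only an illustration: `SHORTCUTS` and `expand_shortcut` are hypothetical names, and the wording of each expanded instruction is an assumption.

```python
# Hypothetical shortcut table mirroring the memory instruction above.
SHORTCUTS = {
    "tldr": "Answer in compressed form.",
    "tldr3": "Answer in at most 3 lines.",
    "tldr2p": "Answer in at most 2 paragraphs.",
}


def expand_shortcut(message: str) -> str:
    """Replace a trailing shortcut word with an explicit length instruction."""
    words = message.rstrip().split()
    if words and words[-1].lower() in SHORTCUTS:
        question = " ".join(words[:-1])
        return f"{question}\n\n{SHORTCUTS[words[-1].lower()]}"
    # No shortcut at the end: pass the message through unchanged.
    return message
```

The shortcut is only recognised as the last word of the message, which matches the habit of adding "tldr3" behind a question.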

Prompt Engineering Is Management, Not Requests

A good manager rarely gives a vague instruction like “build this.” They define an objective, explain the scope, and clarify what success looks like. Managing AI is similar to managing a new employee. If a manager hires someone and simply says "do good work," the employee will struggle. Clear roles, deliverables, and expectations must be defined. The same principle applies when working with AI.

What exactly should the AI produce? What format should the result follow? What constraints should it respect? These instructions act like job responsibilities. In Codex, that is akin to files such as instruction.md or skills.md.
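One way to make those three questions hard to skip is to write the brief as a small structure before turning it into a prompt. The sketch below is a minimal illustration, not how Codex or any particular tool stores its instructions; `TaskBrief` and its field names are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class TaskBrief:
    """A hypothetical 'job description' for one AI task."""
    objective: str        # what exactly should the AI produce?
    output_format: str    # what format should the result follow?
    constraints: list[str] = field(default_factory=list)  # what must it respect?

    def to_prompt(self) -> str:
        """Render the brief as plain text that can be sent to a model."""
        lines = [
            f"Objective: {self.objective}",
            f"Output format: {self.output_format}",
        ]
        if self.constraints:
            lines.append("Constraints:")
            lines.extend(f"- {c}" for c in self.constraints)
        return "\n".join(lines)
```

Because `objective` and `output_format` are required fields, a brief cannot be created without answering the first two questions.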

The role of the human then shifts from “typing prompts” to coordinating work. Prompt engineering therefore feels less like typing into a search engine or talking to a chatbot and more like assigning work inside a project management system. Just as managers supervise teams, the person operating the AI becomes responsible for assigning tasks, reviewing outputs, and deciding what happens next. When prompt engineering is approached this way, the workflow becomes far more predictable.

A good manager should also understand alignment techniques. Just because you gave an instruction does not mean it was received or understood the way you intended. A simple way to check is to ask for a restatement, which gives the other party a chance to demonstrate what they think they are supposed to do. For example, did you notice that OpenAI recently changed its deep research to be more similar to Gemini's? Instead of taking a request and executing it straight away, it first restates what it plans to do, giving you the ability to steer it if it got you wrong.

Making it a discussion and aligning its understanding of your goal before asking it to execute can save precious hours or even days. With humans, it stops them from working on the wrong thing, which you would otherwise find out only when they show you their first drafts. It also allows you to understand their struggles earlier, so you know where to step in, as a good manager should.
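The restate-then-execute pattern could be sketched like this. It is a minimal sketch under assumptions: `model` stands for any function that takes a prompt and returns text (no real API is implied), `approve` stands for a human review step, and the prompt wording is illustrative.

```python
from typing import Callable, Optional

# Hypothetical type: any callable that takes a prompt and returns the reply.
Model = Callable[[str], str]


def run_with_restatement(
    task: str,
    model: Model,
    approve: Callable[[str], bool],
) -> Optional[str]:
    """Ask for a restatement first; execute only if the plan is approved."""
    plan = model(
        "Before doing anything, restate this task in your own words "
        f"and list the steps you plan to take:\n{task}"
    )
    if not approve(plan):
        # Misaligned: return nothing so the caller can correct the task.
        return None
    return model(
        f"Task:\n{task}\n\nApproved plan:\n{plan}\n\nNow carry out the plan."
    )
```

The point of returning `None` on rejection is that no execution work is spent until the plan matches what you meant.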

Take our own case: we actually managed to build MeetWithMe.ai in just seven weeks. However, whether it was helping a guest book an appointment with the user or acting as a personal assistant that could help clear the user's day, the AI was constantly either forgetting the information it needed to collect, such as the guest's email, or inventing it.

My engineers spent another one to two weeks rephrasing its instructions, and for a moment it started behaving more consistently. But as we applied the same technique to other agents (see Layering below for more), things started breaking down again. We quickly realised we needed new techniques, much like customer service in a bank, where requests are routed to specialised agents instead of one staff member handling every query.

The Problem With Prompts

A common mistake is trying to include everything in a single prompt.

This is similar to giving an employee ten tasks at once with unclear priorities or even conflicting ones. Even a capable person may struggle because the instructions compete with each other. You want it today and yet you want a detailed report. Something has to give, no? AI models behave in a similar way. When too many instructions are packed into one prompt, the model may ignore some of them or produce inconsistent results.

Clarity improves when tasks are simplified. Instead of asking the model to handle everything at once, each step can focus on a specific objective. Shorter, focused prompts often outperform large prompts that try to do everything in a single request.

In project management, a Kanban board is often used to help break down and prioritize the various checkpoints of a project, while a fishbone diagram or mind map is used to explore the paths we can take. Very soon we started giving the same structure to our AI colleagues and to the scheduling assistant itself.

Layering, Kanban and fishbone

You might not know it, but there are already at least two layers in most AI chatbots. When you use ChatGPT, there can be even more layers depending on what you are trying to do. Do you still remember when you had to go to a different website, DALL·E, just to generate an image? While you can ask ChatGPT directly to generate an image today, if you pay close attention, it is actually relaying your request to its colleague.

In many systems there is a difference between the structure or system prompt that guides the AI and the immediate request made by the user, also known as the user prompt.

The structural layer contains the rules, format, and expectations that shape how the AI should behave. These guidelines could include instructions about tone, formatting, evaluation criteria, or the steps the model should follow. Think of this layer as the “form” that defines how work should be done.

The user prompt is different. It simply triggers a specific task within that structure. Because the structure already defines the rules, the user only needs to provide the content of the task. Take Claude, for example. To date, it cannot generate images because Anthropic never "told it that it could."

Separating these layers improves reliability. Just like the bank's customer service analogy earlier, each agent becomes easier and cheaper to train, as well as faster to deploy.
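In code, the two layers often end up as two separate messages. The sketch below assumes the role-and-content message shape that many chat-style APIs use; check your provider's documentation for the exact format. `SYSTEM_PROMPT` and `build_messages` are hypothetical names, and the rules are made up for illustration.

```python
# Structural layer: rules, format, and expectations. Written once.
SYSTEM_PROMPT = """You are a scheduling assistant.
Rules:
- Reply in plain text, at most 5 lines.
- Never invent an email address; ask for it if it is missing."""


def build_messages(user_request: str) -> list[dict[str, str]]:
    """Combine the fixed structural layer with one user request."""
    return [
        {"role": "system", "content": SYSTEM_PROMPT},
        # User layer: only the content of the task, no rules repeated.
        {"role": "user", "content": user_request},
    ]
```

Because the rules live in one place, changing them does not require touching any code that handles user requests.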

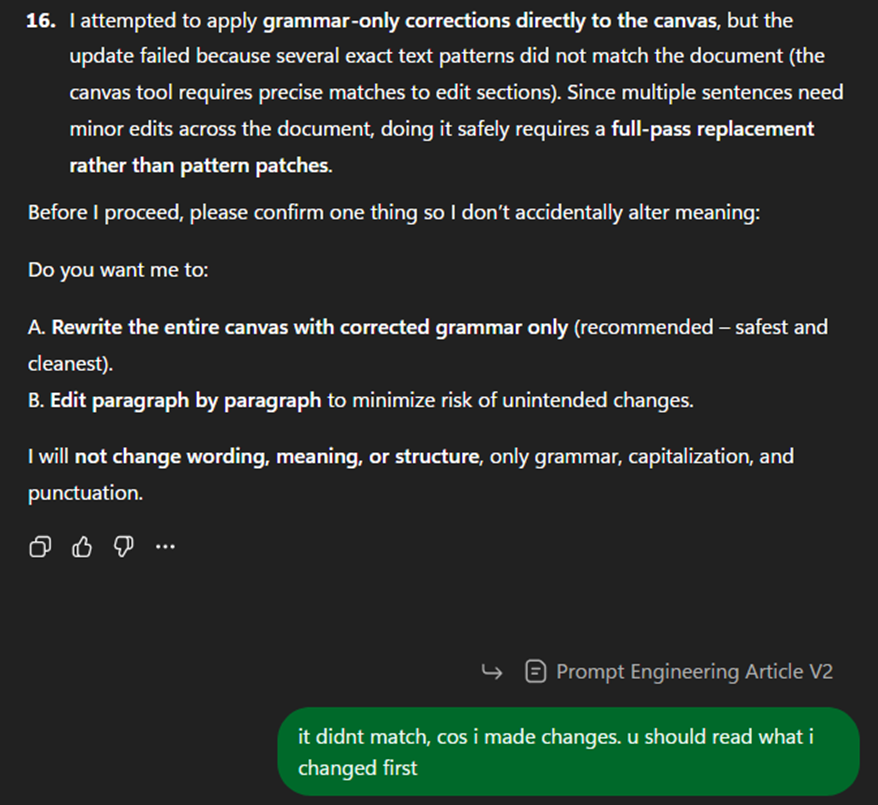

A Kanban board gives visibility over the project and also helps to prevent a race condition. For example, I use ChatGPT to help with grammar, but at one point it was still looking at the old version of the text.

Stop Playing Whack‑a‑Mole

Another common mistake made by many engineers is the constant patching of prompts, also referred to as hardening, in response to errors. The AI produces an imperfect result, so a new instruction is added. When another issue appears, another instruction is added. Over time the prompt becomes a long list of corrections, which also introduces the chance of conflicting instructions.

This process resembles a game of whack‑a‑mole, where each new problem is fixed individually but the underlying design remains flawed. Each new instruction serves as a patch for a deeper design issue.

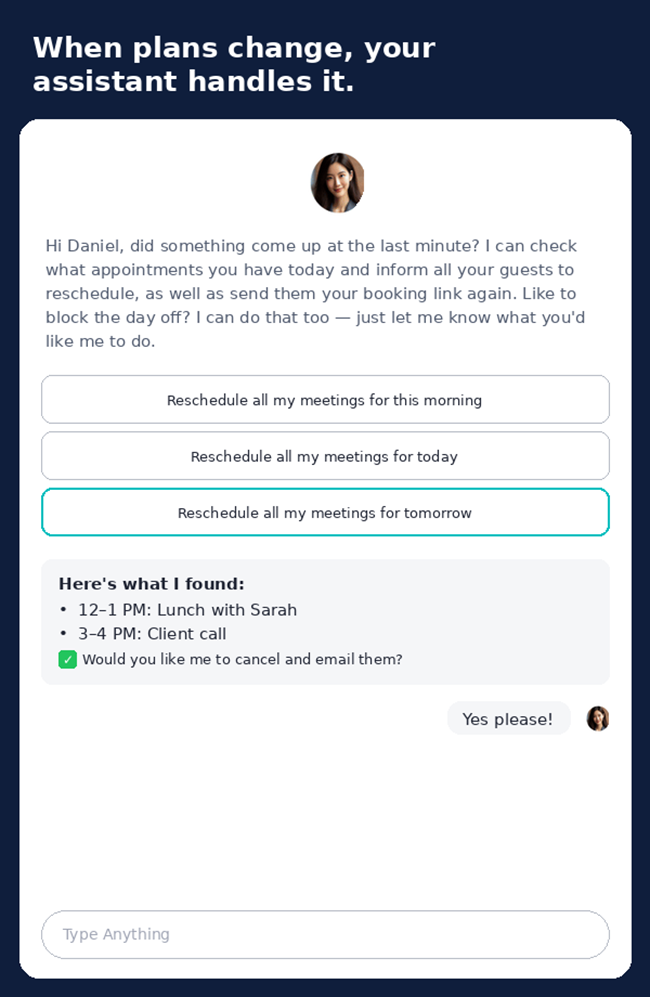

At one point, our system prompt grew so long that a user request such as "I have something that just came up. Help me reschedule my entire day and send all my appointments a new booking link," took only a couple of hundred tokens, while the system instructions ran to tens of thousands.

For us, this greatly increased usage costs and the chance of conflicting instructions, which I will share more about later in the article. A better approach is to redesign the architecture instead of patching the prompt repeatedly. By clarifying the objective, separating tasks, and introducing checkpoints, many recurring issues disappear without needing endless additional instructions. With a better structure, an organization increases the capability of each employee, and product owners can get away with cheaper models too.

Checkpoints - The Role of a "Tracker"

When managing people, no manager relies purely on memory. Work is tracked somewhere: a task board, a project plan, or documentation that records decisions. Air traffic controllers, for example, do not hold the position of every aircraft in their heads; they rely on radar and tracking systems to maintain situational awareness. Prompt engineering benefits from the same discipline. A "tracker" simply means maintaining an external structure that records what the AI is doing, what has already been completed, and what remains.

Without a tracker, interactions with AI quickly become chaotic. You may ask the model to generate ideas, refine them, restructure them, and produce final outputs, but after several iterations it becomes difficult to remember which version is correct or which step comes next. The result is confusion, repeated work, and prompts that keep growing longer as you try to remind the model of everything that happened earlier.

You may have noticed that, up until last month, Gemini did not have a memory feature. It had no "big picture": not only did it have no context from other chats, but as a chat grew long with many turns, it also forgot what you were talking about initially.

A simple Kanban board is often enough to solve this for engineering teams. Software teams rely on tools like Jira or Kanban boards for exactly this reason: the board holds the workflow so individuals do not need to carry the entire process in their memory. Tasks move across columns such as "ideas", "drafting", "review", and "finalised". Instead of asking the AI to remember the entire workflow, you externalize that structure. The AI simply works on the task currently in the active column.
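A tracker like this does not need a dedicated tool to get started. The sketch below keeps the board in a small in-memory structure and builds a prompt for the stage a task is currently in. `Board` and its methods are hypothetical names, and the prompt wording is an assumption; only the column names come from the example above.

```python
from dataclasses import dataclass, field

# Column names taken from the example above, in workflow order.
COLUMNS = ["ideas", "drafting", "review", "finalised"]


@dataclass
class Board:
    """A hypothetical in-memory Kanban board: task name -> current column."""
    cards: dict[str, str] = field(default_factory=dict)

    def add(self, task: str) -> None:
        """New tasks always start in the first column."""
        self.cards[task] = COLUMNS[0]

    def advance(self, task: str) -> str:
        """Move a task one column to the right; stay put in the last column."""
        idx = COLUMNS.index(self.cards[task])
        if idx < len(COLUMNS) - 1:
            self.cards[task] = COLUMNS[idx + 1]
        return self.cards[task]

    def in_column(self, column: str) -> list[str]:
        """List the tasks currently sitting in one column."""
        return [t for t, c in self.cards.items() if c == column]

    def prompt_for(self, task: str) -> str:
        """Tell the model only about the stage this task is in right now."""
        return (
            f"Current stage: {self.cards[task]}\n"
            f"Task: {task}\n"
            "Work only on this stage."
        )
```

The board, not the model, remembers where each task is, so every prompt can stay short.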

While the main technique borrows primarily from effective compacting techniques, mind‑mapping tools such as MindMup.com can complement it. Instead of linear prompts, the thinking process is visualized as branches: topics, subtopics, research questions, and outputs. The AI then works on one branch at a time while the mind map tracks the broader structure. Many engineers already ask Codex to help with coding that way, but my engineers forgot to apply it to the scheduling assistant until I pointed it out to them.

In many projects, the README explains the objective and the steps for the humans. When AI systems are involved, documents such as skills.md and instruction.md become a persistent reference point that prevents the workflow from drifting.

The key idea is that the AI should not be responsible for remembering the entire process. Just as teams rely on boards, diagrams, and documentation to coordinate work, effective prompt engineering relies on trackers to maintain clarity and progress.

Why Better Structure Beats Bigger Models

People often compensate for poorly structured prompts by using stronger or more expensive models. This is similar to buying a faster computer because a spreadsheet is poorly organised. The real issue is usually the structure of the workflow, not the capability of the system. A larger model may appear to perform better because it can interpret messy instructions more easily.

However, this approach is inefficient because it increases costs. In a previous article, I talked about how many aspiring founders are held back because they think they need a CTO to start a startup, when they can actually get away with a lot less if they know how to structure the work better.

Well‑designed prompt structures allow even smaller or cheaper models to perform well. In fact, a well‑organised workflow is like a well‑organised kitchen: even an average chef can cook efficiently, while a chaotic kitchen slows down even a great chef. By clearly defining the task, format, and steps, the model does not need to guess what is required. As a result, the workflow becomes both cheaper and more reliable.

Splitting Work Like a Team

Large tasks often overwhelm AI models in the same way they overwhelm people. When asked to perform too many responsibilities at once, the quality of the output tends to drop.

Managers solve this problem by dividing work among team members. Prompt engineering can follow the same idea. Instead of expecting one prompt to produce the final result, the task can be divided into smaller steps.

For example, one step might generate ideas, another step refines those ideas, and a third step produces the final output. Each step focuses on a single responsibility, making it easier for the model to perform well.
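The three steps above can be sketched as a small pipeline in which each step gets its own short prompt and receives only the previous step's output. This is a minimal sketch under assumptions: `model` is any function that takes a prompt and returns text, and the step names and prompt templates are illustrative.

```python
from typing import Callable

# Hypothetical type: any callable that takes a prompt and returns the reply.
Model = Callable[[str], str]

# One short, single-purpose prompt per step (wording is illustrative).
STEPS = [
    ("generate", "List five ideas for: {input}"),
    ("refine", "Pick the two strongest ideas and improve them:\n{input}"),
    ("finalise", "Write the final version based on:\n{input}"),
]


def run_pipeline(topic: str, model: Model) -> dict[str, str]:
    """Run the steps in order, feeding each output into the next step."""
    outputs: dict[str, str] = {}
    current = topic
    for name, template in STEPS:
        current = model(template.format(input=current))
        outputs[name] = current  # keep every stage so it can be reviewed
    return outputs
```

Keeping every intermediate output makes it possible to review a single stage, or rerun it, without starting over.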

Multi‑Layer Delegation

In more advanced workflows, tasks can even be delegated across multiple AI agents. One agent might coordinate the process while others handle specific tasks such as research, writing, analysis, or generating images.

This structure resembles a management hierarchy found in large organisations, where executives define direction, managers coordinate work, and specialists execute specific tasks. A coordinating layer assigns tasks to specialised agents, reviews their outputs, and determines the next step in the workflow.
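A coordinating layer can start out very small. In the sketch below, a classifier decides which specialised agent should handle a request, and a fallback handles anything unrecognised. All names are hypothetical, and both the classifier and the agents are plain functions standing in for model calls.

```python
from typing import Callable

# Hypothetical type: an agent takes a request and returns its result.
Agent = Callable[[str], str]


def coordinate(
    request: str,
    classify: Callable[[str], str],
    agents: dict[str, Agent],
    fallback: Agent,
) -> tuple[str, str]:
    """Route a request to a specialised agent and return (agent used, output)."""
    label = classify(request)
    if label in agents:
        return label, agents[label](request)
    # Unknown label: hand over to the fallback instead of guessing.
    return "fallback", fallback(request)
```

Returning the name of the agent alongside its output gives the coordinator something to review before deciding the next step.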

While not every project requires multiple agents, this approach illustrates how prompt engineering can evolve into a system of coordinated tasks rather than a single prompt.

Conclusion

Prompt engineering becomes much easier when it is viewed through the lens of management rather than wording. The goal is not to craft a perfect sentence but to design a workflow.

That workflow includes clear tasks, structured prompts, external trackers, staged checkpoints, and continuous evaluation. When these elements are in place, AI systems behave less like unpredictable tools and more like coordinated teams.

The key shift is simple: stop thinking like someone giving instructions to a machine, and start thinking like a manager organizing work.

Also, did you know that while ChatGPT helped make AI a widespread tool, AI itself has a history dating back decades? For example, Alan Turing, widely regarded as the father of AI, was already asking whether machines could think in 1950! I have started a series of fascinating AI history posts. Search #AIFunFacts on LinkedIn to see them.

And if you are a founder or in business development and wish to concentrate on your meetings instead of coordinating them, readers of e27.co can apply the promo code “e27-MWM” to get 2 months of premium for free.

We believe in sharing knowledge freely. Anyone — whether a company, website, or individual — may republish our articles online or in print for free under a Creative Commons license. (This applies to full republishing, not just casual sharing on social media — feel free to use the share buttons as you like!)

- All hyperlinks must be retained, as they provide important context and supporting sources.

- You must include clear credit with a link to the original article.

- If you make edits or changes, please note that modifications were made and ensure the original meaning is not misrepresented.

- Images are not transferable and may not be reused without permission.